Discover Shopify App Store – A Comprehensive Handbook 2024

Explore the Shopify App Store for tailored solutions to grow your business. Discover your perfect app today!

11-11-2024

If your website is a house, the robots.txt file is its set of house rules. The first thing a visitor does on arrival is read those rules to find out whether the host allows them in.

Robots.txt is a text file that webmasters create to tell robots (search engine spiders) which pages of their site they may crawl, so configuring it properly is very important. If your website has sections you do not want crawled, this is where you set that up. Keep in mind, though, that robots.txt is publicly readable and is honored only by well-behaved crawlers, so genuinely sensitive information also needs password protection or similar access control. A well-thought-out configuration benefits your SEO as well.
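
To make the idea of "guiding robots" concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`, which evaluates robots.txt rules the way a compliant crawler would. The rules, the `/private/` and `/drafts/` paths, the `ExampleBot` user agent, the `build_parser` helper and the example.com URLs are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one group for all crawlers, one stricter group
# that applies only to a crawler identifying itself as "ExampleBot".
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: ExampleBot
Disallow: /private/
Disallow: /drafts/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    urls = ("https://example.com/blog/", "https://example.com/drafts/post-1")
    for agent in ("Googlebot", "ExampleBot"):
        for url in urls:
            # can_fetch() answers: may this user agent crawl this URL?
            print(agent, url, rules.can_fetch(agent, url))
```

A crawler follows only the group that matches it most specifically and falls back to `*` otherwise, which is why `/private/` is repeated in the `ExampleBot` group.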

Robots.txt plays an important role in SEO. It helps search engines reach the pages you want crawled and indexed. However, most websites also have directories or files that search engine robots have no need to visit. Excluding those in robots.txt lets crawlers focus on your important pages, which can greatly help your SEO.
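
To illustrate keeping crawlers out of directories they do not need to visit, the sketch below sorts a list of site URLs into crawlable and blocked ones with Python's `urllib.robotparser`. The `/admin/`, `/checkout/` and `/search/` directories, the `split_by_access` helper and the example.com URLs are hypothetical; substitute the paths on your own site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules that keep every crawler out of three utility directories.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /checkout/
Disallow: /search/
"""


def split_by_access(robots_txt: str, user_agent: str, urls: list[str]) -> tuple[list[str], list[str]]:
    """Sort urls into (crawlable, blocked) for user_agent under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    crawlable: list[str] = []
    blocked: list[str] = []
    for url in urls:
        if parser.can_fetch(user_agent, url):
            crawlable.append(url)
        else:
            blocked.append(url)
    return crawlable, blocked


if __name__ == "__main__":
    site_urls = [
        "https://example.com/",
        "https://example.com/products/blue-shirt",
        "https://example.com/admin/login",
        "https://example.com/search/?q=shirt",
    ]
    crawlable, blocked = split_by_access(ROBOTS_TXT, "Googlebot", site_urls)
    print("crawlable:", crawlable)
    print("blocked:", blocked)
```

A `Disallow` value is a path prefix, so `/search/` matches every URL whose path starts with it, including `/search/?q=shirt`.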

Magento SEO Services

by Mageplaza

Let experienced professionals optimize your website's ranking

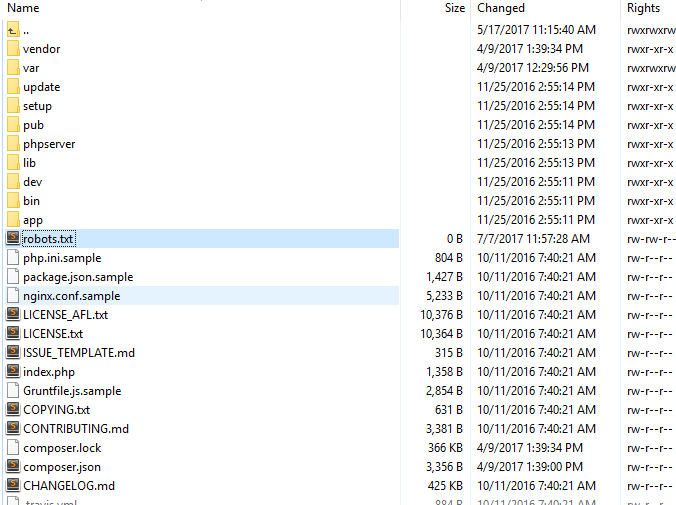

In essence, robots.txt is a very simple text file placed in the root directory of the host. You can create it with any text editor, such as Notepad. Below is a simple robots.txt structure for WordPress:
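
As an assumed stand-in for that structure, the sketch below uses rules similar to the virtual robots.txt that WordPress typically serves (block `/wp-admin/`, except `admin-ajax.php`) and checks them with Python's `urllib.robotparser`. It also derives where the file must live: crawlers request it only from the root of the host, whichever page they start from. The `robots_url_for` and `load_rules` helpers and the example.com URLs are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser

# Assumed WordPress-style rules. Allow comes first because urllib.robotparser
# applies the first matching rule, while Google applies the longest match;
# with this order both interpretations give the same answers.
WORDPRESS_ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
"""


def robots_url_for(page_url: str) -> str:
    """Return the only place a crawler looks for robots.txt: the host root."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content into a rule checker."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    print(robots_url_for("https://example.com/blog/hello-world/?ref=home"))
    rules = load_rules(WORDPRESS_ROBOTS_TXT)
    for path in ("/wp-admin/options.php", "/wp-admin/admin-ajax.php", "/blog/hello-world/"):
        print(path, rules.can_fetch("Googlebot", "https://example.com" + path))
```

A robots.txt placed in a subdirectory (for example `/blog/robots.txt`) is never requested, which is why the file has to sit in the root directory.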

Whether you reuse someone else's robots.txt or write your own, some mistakes are almost inevitable.
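
One easy mistake to make when copying a robots.txt file (our illustration, not taken from an official list) is confusing an empty `Disallow:` line, which blocks nothing, with `Disallow: /`, which blocks the entire site. The sketch below checks both variants with Python's `urllib.robotparser`; the `homepage_crawlable` helper and the example.com URL are hypothetical. Running a quick check like this before uploading a copied file catches the error before search engines see it.

```python
from urllib.robotparser import RobotFileParser

ALLOW_EVERYTHING = "User-agent: *\nDisallow:\n"    # empty value: nothing is blocked
BLOCK_EVERYTHING = "User-agent: *\nDisallow: /\n"  # "/" is a prefix of every path


def homepage_crawlable(robots_txt: str, user_agent: str = "Googlebot") -> bool:
    """Report whether the rules let user_agent fetch the site's home page."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, "https://example.com/")


if __name__ == "__main__":
    print("Disallow:   ->", homepage_crawlable(ALLOW_EVERYTHING))
    print("Disallow: / ->", homepage_crawlable(BLOCK_EVERYTHING))
```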

Without a robots.txt file, search engines have a free run to crawl and index anything they find on your website. Creating one helps search engines crawl your site more efficiently, which can improve how your site performs in search.
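
The "free run" default can be checked directly. In the sketch below, Python's `urllib.robotparser` is given no rules at all, standing in for a site without robots.txt, and it allows every URL; adding a single hypothetical `Disallow: /cart/` line is enough to steer crawlers away from that section. Passing `None` to mean "no file" is an assumption of this sketch, and the `rules_from` helper and example.com URLs are hypothetical. Compliant crawlers generally treat a 404 response for robots.txt the same way.

```python
from typing import Optional
from urllib.robotparser import RobotFileParser


def rules_from(robots_txt: Optional[str]) -> RobotFileParser:
    """Build a parser; None stands for 'the site has no robots.txt'."""
    parser = RobotFileParser()
    parser.parse([] if robots_txt is None else robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    no_file = rules_from(None)
    with_file = rules_from("User-agent: *\nDisallow: /cart/\n")
    for url in ("https://example.com/", "https://example.com/cart/"):
        # Columns: URL, allowed without robots.txt, allowed with robots.txt
        print(url, no_file.can_fetch("Googlebot", url), with_file.can_fetch("Googlebot", url))
```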

The Magento 2 SEO extension from Mageplaza includes many outstanding features that are activated automatically on installation, with no code modifications required. It is also user-friendly and helps improve your SEO.

Related Post

Discover Shopify App Store – A Comprehensive Handbook 2024

Explore the Shopify App Store for tailored solutions to grow your business. Discover your perfect app today!

Top 10+ Shopify Store Name Generators: Ultimate Review Guide

By exploring the top 10+ Shopify store name generators, we aim to help you create unique and meaningful store names.

Should I Hire A Shopify Expert? Reasons, How-tos

This article focuses on the question “Should I hire a Shopify expert?”, covering the main reasons and practical tips for finding the right fit for your business.

How to Make FAQ Page Shopify For SEO: A Comprehensive Guide

Confused by customer questions? This article guides you through making a Shopify FAQ page that saves you time and boosts sales.

Top 10 Shopify ERP Solutions to Improve Operation Efficiency

Discover the top 10 Shopify ERP solutions to streamline your business operations, improve efficiency, and automate inventory management & order fulfillment.
